SIGGRAPH 2021 is entirely online this year. Having attended in person in the past, I think this is one of the hardest experiences to translate into a virtual event. While pretty much everything exhibited at SIGGRAPH is digital (compared to, for example, an agricultural trade show), our experience of a virtual event is limited by the display we view it on at home. It is easy for me to watch a video online about the new features of a tractor, but much harder to see what is different about a new display technology. This was an issue when I wrote about HDR content creation as well: how can I show the differences between SDR and HDR in a medium (a website) that is limited to SDR? My favorite part of SIGGRAPH was seeing new 3D displays, VR headsets, and similar hardware, and those don’t always translate into a virtual experience.

But NVidia has taken SIGGRAPH as an opportunity to announce a number of new products. On the hardware side, they announced the RTX A2000, a lower-tier professional GPU with full RTX support. It is a dual-slot, low-profile card, so it will fit into 2U servers and smaller desktop cases. With 3328 cores, it is nearly on par with a Quadro P5000 in terms of processing power and bandwidth, but with less memory, at 6GB. Sitting between the GeForce 3050 Ti and the 3060 performance-wise, this card should be powerful enough to meet the needs of most UHD video editors, as a full pro visualization solution for under $500. The fact that it is a low-profile PCIe card with no external PCIe power connector makes it even easier to convert a refurbished storage server into a video editing station. I have described this approach in the past, but low-profile PCIe slots and missing PCIe power plugs are usually the limiting factors that prevent certain server models from being used that way. The card uses four Mini-DisplayPort 1.4 connectors, which are not my favorite option, but I understand why they are necessary on a card this size, since the second slot is needed for cooling vents.

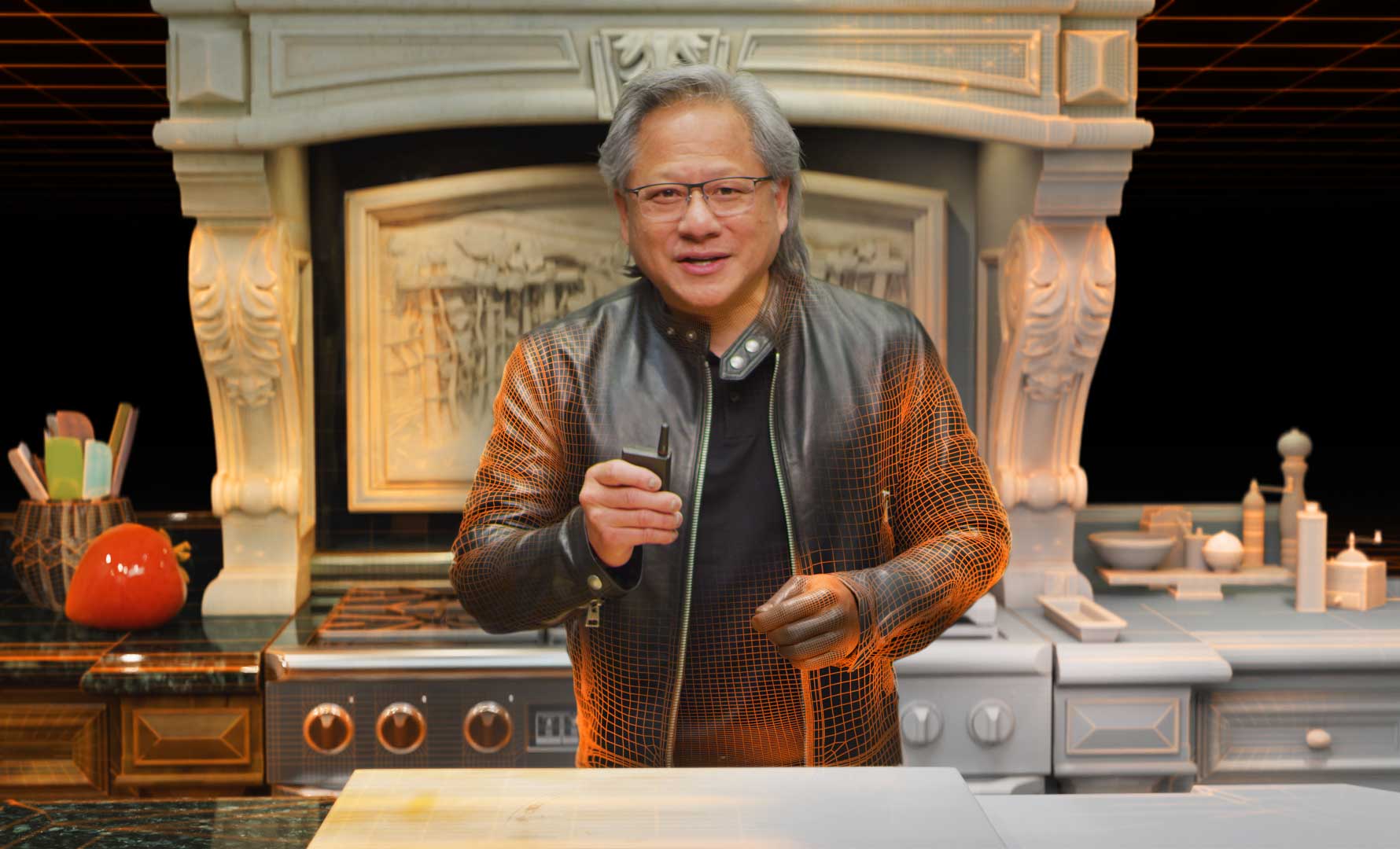

NVidia also shared a behind-the-scenes documentary about how they made this year’s GTC keynote. I watched that keynote back in April and noticed a few visual effects that surprised me, which I figured must have been accomplished by shooting Jensen in front of a green screen for a virtual kitchen. But I never imagined that the entire thing, including the presenter, was fully CGI. (Edit: Apparently it was not. The marketing materials implied otherwise and have since been corrected: only the 14 seconds of that visual effect were CGI, although they did match the physical shoots well.) They did an incredible job of matching previous presentations from “Jensen’s Kitchen,” which I presume were traditionally shot for past shows. And of course they leaned heavily on Omniverse and their own 3D tools to make it happen.

Speaking of Omniverse, NVidia has all sorts of announcements surrounding that product. Blender and Adobe Substance Painter now directly support Omniverse, NVidia’s solution for tying together complex 3D workflows. OmniSurface is a new extension of their MDL (Material Definition Language) interface. And the USD (Universal Scene Description) 3D format now has integrated support for accelerated solid object physics, developed in a partnership between Apple, NVidia, and Pixar. NVidia has also used their own Image2Mesh GAN to create GANverse 3D, which converts images of cars into fully usable 3D models via AI. It will be interesting to see this technology implemented in other ways as well. At this point, by my understanding, you can model things in Blender, move them through Omniverse to Unreal Engine, render them, and edit them together in Resolve, all without spending a dollar. This is probably one of many ways for people to learn and explore functional 3D workflows with zero monetary investment. I find that I rarely have the patience for true 3D work, but I do find it interesting to learn about what is happening, and I occasionally use some of those features for rough temp visual effects in my edits. I have always done those “post-viz” effects in 2D, but with these new tools being developed, it is probably time to start doing some of that work in 3D, because it is becoming much easier to create quality 3D content than it used to be.