VR headsets have been available for over a year now, and more content is constantly being developed for them. We should expect that rate to increase as established technology companies release new headset models, prompted in part by the new VR features expected in Microsoft’s next update to Windows 10. As the potential customer base grows, the software continues to mature and the content offerings broaden. And with the advances in graphics processing technology, we are finally getting to the point where it is feasible to edit videos in VR on a laptop.

While a full VR experience requires true 3D content, in order to render a custom perspective based on the position of the viewer’s head, there is a “video” version of VR, which is called 360 degree video. The difference between “Full VR” and “360 Video” is that while both allow you to look around in every direction, 360 video is prerecorded from a particular point, and you are limited to the view from that spot. You can’t move your head to see around behind something, like you can in true VR. But 360 video can still offer a very immersive experience, and arguably better visuals, since they aren’t being rendered on the fly. 360 video can be recorded in stereoscopic or flat, depending on the capabilities of the cameras used. Stereoscopic is obviously more immersive, feels less like a video dome, and is inherently supported by the nature of VR head-mounted displays (HMDs). I expect that stereoscopic content will be much more popular in 360 video than it ever was for flat screen content. Basically the viewer is already wearing the 3D glasses, so there is no downside, besides needing twice as much source imagery to work with, just as with flat screen stereoscopic. While there is a difference between VR and 360 video, I may use the terms interchangeably, since all references to recording or editing VR in this series are actually describing 360 video.

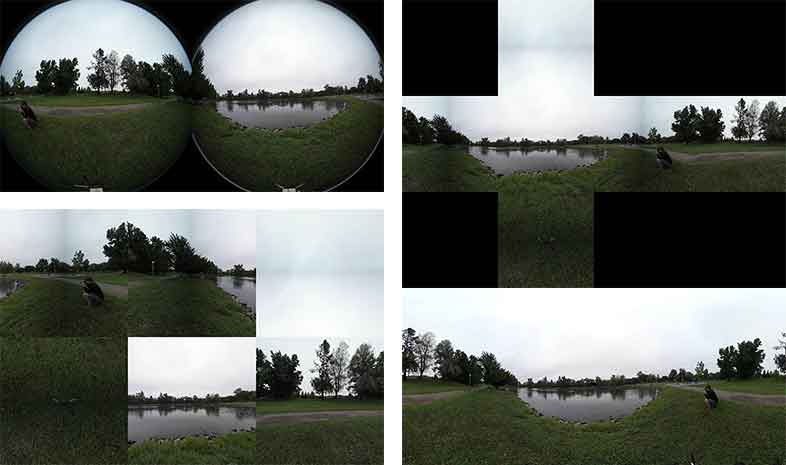

There are a variety of options for recording 360 video, from a single ultra-wide fisheye lens on the Fly360, to dual 180 degree lens options like the Gear 360, Nikon KeyMission, and Garmin Virb. GoPro is releasing the Fusion, which will fall into this category as well. The next step is more lenses, with cameras like the Orah4i or the Insta360 Pro. Beyond that, you are stepping into the much more expensive rigs with lots of lenses and lots of stitching, but usually much higher final image quality, like the GoPro Omni or the Nokia OZO. There are also countless rigs that use an array of standard cameras to capture 360 degrees, but these solutions are much less integrated than the all-in-one products that are now entering the market. Regardless of the camera you use, you are going to be recording one or more files in a pixel format that is fairly unique to that camera, and those files will need to be processed before they can be used in the later stages of the post workflow.

There are many different approaches to storing 360 images, which are inherently spherical, as a video file, which is inherently flat. This is the same issue that cartographers have faced for hundreds of years in creating flat paper maps of a planet that is inherently curved. There are sphere map, cube map, and pyramid projection options (among others), but based on the way VR headsets work, the equirectangular format has emerged as the standard for editing and distribution encoding. Other projections are occasionally used for certain effects processing or other playback options.
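
To make the map analogy concrete, here is a minimal sketch of how an equirectangular frame lays out viewing directions, assuming the usual convention that the full 360 degrees of yaw spans the frame width and 180 degrees of pitch spans the height. The function names and the 3840x1920 frame size are my own illustrative choices, not something any particular camera or NLE mandates.

```python
import math

def direction_to_equirect(yaw_deg, pitch_deg, width, height):
    """Map a viewing direction to a pixel in an equirectangular frame.

    yaw_deg:   -180..180, 0 is the frame center, positive is to the right.
    pitch_deg: -90 (straight down) to 90 (straight up).
    Assumed convention for illustration only.
    """
    u = (yaw_deg + 180.0) / 360.0   # 0..1 across the frame width
    v = (90.0 - pitch_deg) / 180.0  # 0..1 down the frame height
    # Clamp so the far right and bottom edges stay inside the frame.
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return x, y

def horizontal_stretch(pitch_deg):
    """How much wider content is drawn at this pitch than at the horizon."""
    return 1.0 / math.cos(math.radians(pitch_deg))

# Looking straight ahead lands in the middle of a 3840x1920 frame.
print(direction_to_equirect(0, 0, 3840, 1920))
# Content 60 degrees above the horizon is stretched to about twice its width.
print(round(horizontal_stretch(60), 3))
```

The stretch factor is the same effect that makes Greenland look oversized on many world maps: every row of the frame is the same width in pixels, even though the circle it represents shrinks toward the poles.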

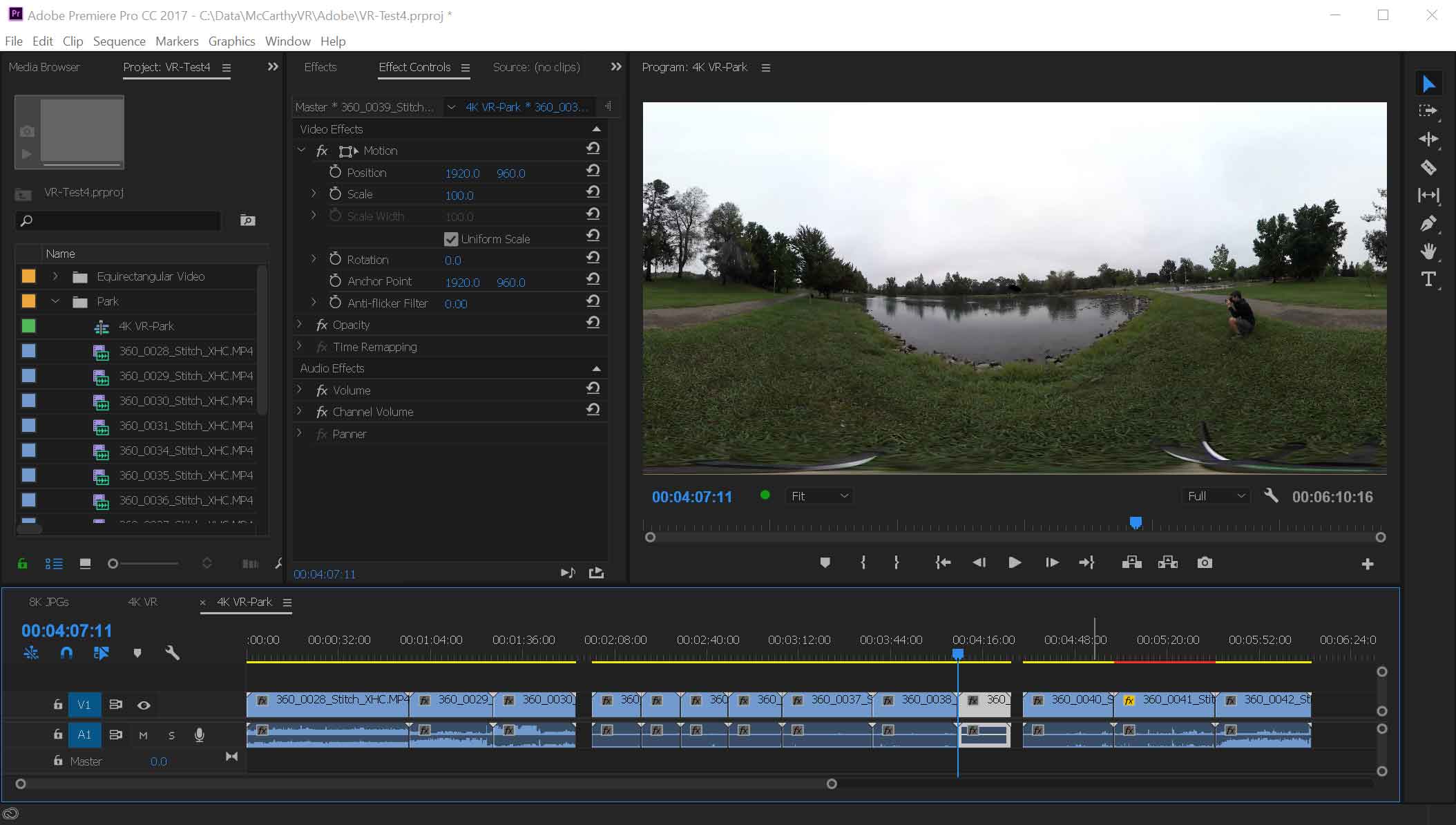

Usually the objective of the stitching process is to get the images from all of your lenses combined into a single frame, with the least amount of distortion and the fewest visible seams. There are a number of software solutions that do this, from After Effects plugins, to dedicated stitching applications like Kolor AVP and Orah VideoStitch-Studio, to unique utilities for certain cameras. Once you have your 360 video footage in the equirectangular format, most of the other steps of the workflow are similar to their flat counterparts, with the exception of VFX. You can cut, fade, title, and mix your footage in an NLE, and then encode it in the standard H.264 or H.265 formats with a few changes to the metadata.

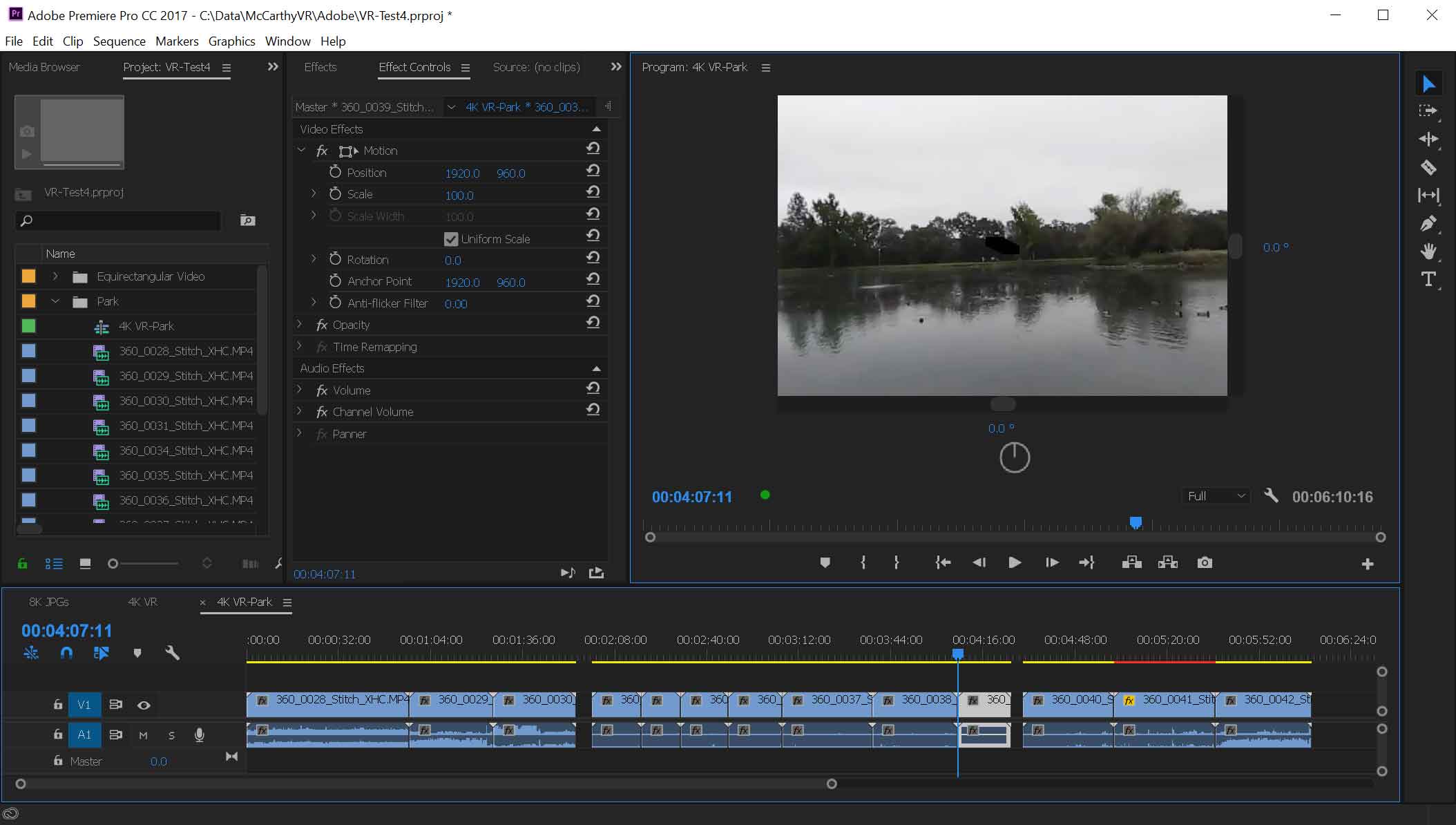

Technically the only thing you need to add to an existing 4K editing workflow, to make the jump to 360 video, is a 360 camera. Everything else could be done in software, but the other main thing you will want is a VR headset or HMD (Head Mounted Display). It is possible to edit 360 video without an HMD, but it is a lot like grading a film using scopes but no monitor. The data and tools you need are all right there, but without being able to see the results, you can’t be confident of what the final product will be like. You can scroll around the 360 video in the view window, or look at the whole distorted projected image, but it won’t have the same feel as experiencing it in a VR headset.
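
For a sense of what that scrollable view window is doing, here is a rough sketch of the underlying math, assuming a simple rectilinear (pinhole) view and the common equirectangular layout where yaw spans the frame width and pitch spans the height. This is my own illustration of the general technique, not how any specific NLE implements its viewer, and every name and parameter in it is hypothetical.

```python
import math

def view_to_frame(nx, ny, hfov_deg, aspect, yaw_deg, pitch_deg, frame_w, frame_h):
    """Find where a point in a flat view window samples the 360 frame.

    nx, ny: position in the view window, each from -1 to 1 (ny is up).
    hfov_deg: horizontal field of view of the window.
    aspect: window width divided by window height.
    yaw_deg, pitch_deg: where the viewer has scrolled to.
    Returns floating point (x, y) coordinates in the equirectangular frame.
    """
    # Ray through the window point, with the camera looking along +z.
    half_w = math.tan(math.radians(hfov_deg) / 2.0)
    x = nx * half_w
    y = ny * half_w / aspect
    z = 1.0
    # Tilt the camera up by pitch (rotation about the horizontal axis).
    p = math.radians(pitch_deg)
    y, z = y * math.cos(p) + z * math.sin(p), -y * math.sin(p) + z * math.cos(p)
    # Turn the camera right by yaw (rotation about the vertical axis).
    w = math.radians(yaw_deg)
    x, z = x * math.cos(w) + z * math.sin(w), -x * math.sin(w) + z * math.cos(w)
    # Convert the ray back to angles, then to frame coordinates.
    ray_yaw = math.degrees(math.atan2(x, z))
    ray_pitch = math.degrees(math.atan2(y, math.hypot(x, z)))
    return ((ray_yaw + 180.0) / 360.0 * frame_w,
            (90.0 - ray_pitch) / 180.0 * frame_h)

# The center of an unscrolled window samples the center of the frame.
print(view_to_frame(0, 0, 90, 16 / 9, 0, 0, 3840, 1920))
```

A viewer repeats this lookup for every pixel in the window on every frame, which is part of why a capable GPU makes such a difference to the editing experience.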

360 video is not as processing intensive as true 3D VR, but it still requires a substantial amount of power to provide a good editing experience. I am using a ThinkPad P71 with an NVIDIA Quadro P5000 GPU to get smooth performance during all these tests. Over the next few posts, I will be exploring all aspects of the jump to 360 video, from shooting with a 360 camera and stitching the footage, to editing and finishing it, and getting it encoded and posted to Facebook and YouTube.

Hi Mike, thanks for this. Do you have any workflows for combining traditional footage with 360? For example, have a traditional introduction, then crossfade to 360 footage. If I inject the metadata into this file, it distorts the standard footage. Any way to combine the 2 formats in one YouTube video?

Yes, you can bring both regular and 360 video into the same PPro project. Set the sequence up for your VR footage, and then add your regular footage. You can either scale it small enough that it just works, or you can apply the “Skybox Project 2D” effect in PPro11 or the “VR Plane to Sphere” effect in PPro12. This will place the traditional video in front of the viewer, surrounded by black unless you have a spherical background on a lower layer.