I once again got the opportunity to attend NVidia’s GPU Technology Conference or GTC in San Jose last week. The event has become much more focused on AI supercomputing and deep learning as those industries mature, but there was also a concentration in VR, for those of us from the visual world.

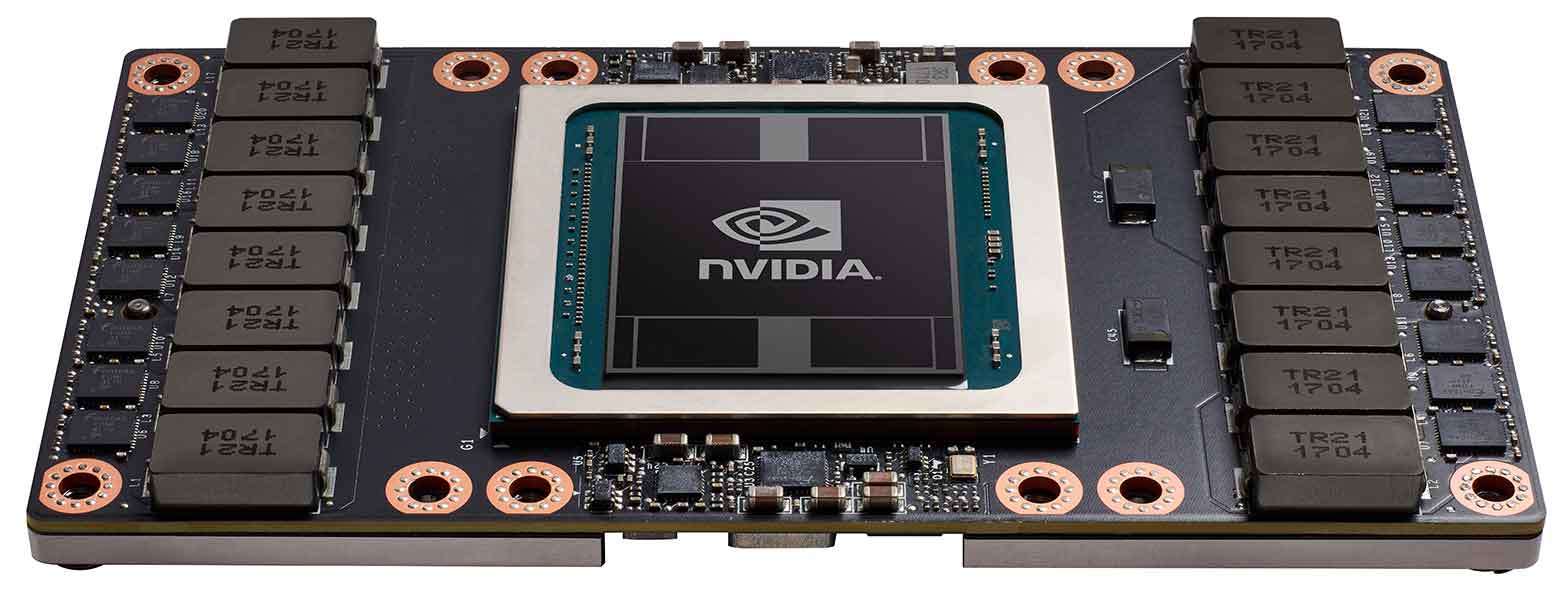

The big news was that NVidia released the details of their next generation GPU architecture, code named Volta. The flagship chip will be the Tesla V100, with 5120 CUDA cores and 15 teraflops of computing power. It is a huge 815mm² chip, created with a 12nm manufacturing process for better energy efficiency. Most of its unique architecture improvements are focused on AI and deep learning, with specialized execution units for Tensor calculations, which are foundational to those processes. Similar to last year’s GP100, the new Volta chip will initially be available in their SXM2 form factor for dedicated GPU servers like their DGX1, which uses the NVLink bus, now running at 300GB/s. The new GPUs will be a direct swap-in replacement for the current Pascal-based GP100 chips. There will also be a 150W version of the chip on a PCIe card similar to their existing Tesla lineup, but only requiring a single half-length slot.

Assuming that they put similar processing cores into their next generation of graphics cards, we should be looking at a 33% increase in maximum performance at the top end. The intermediate stages are more difficult to predict, since that depends on how they choose to tier their cards. But the increased efficiency should allow more significant increases in performance for laptops, within existing thermal limitations.
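One way to arrive at a figure like that 33% is a simple core-count comparison. The sketch below assumes a top-end Pascal graphics card with 3840 CUDA cores (the Titan Xp's count, which is not stated above) and ignores clock speeds and architectural changes, so treat it as a rough illustration rather than a benchmark or prediction.

```python
# Rough core-count comparison between generations.
# Assumption: the baseline is a top-end Pascal graphics card with 3840 CUDA cores
# (Titan Xp). That number is not from this article.
PASCAL_TOP_CORES = 3840   # assumed baseline
VOLTA_V100_CORES = 5120   # Tesla V100 core count quoted in the article


def core_count_uplift(new_cores: int, old_cores: int) -> float:
    """Return the fractional increase in core count.

    This ignores clock speed, memory bandwidth, and architectural changes,
    so it is only a ceiling-style estimate of scaling.
    """
    return new_cores / old_cores - 1.0


uplift = core_count_uplift(VOLTA_V100_CORES, PASCAL_TOP_CORES)
print(f"Core count increase: {uplift:.0%}")
```

Real-world gains will depend on how the consumer cards are actually configured, which is why the lower tiers are harder to guess at.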

NVidia is continuing their pursuit of GPU enabled autonomous cars, with their DrivePX2 and Xavier systems for vehicles. The newest version will have a 512 Core Volta GPU and a dedicated deep learning accelerator chip which they are going to open source for other devices. They are targeting larger vehicles now, specifically in the trucking industry this year, with an AI enabled semi-truck in their booth. They also had a tractor showing off Blue River’s AI enabled spraying rig, targeting individual plants for fertilizer or herbicide. It seems like farm equipment would be an optimal place to implement autonomous driving, allowing perfectly straight rows, smooth grades, all in a flat controlled environment with few pedestrians or other dynamic obstructions to be concerned about. (Think Interstellar) But I didn’t see any reference to them looking in that direction, even with a giant tractor in their AI booth.

On the software and application front, SAP showed an interesting implementation of deep learning that analyzed broadcast footage and other content to identify logos and branding, in order to quantifiably measure the effectiveness of various forms of advertising. I expect we will continue to see more machine learning implementations of video analysis, for things like automated captioning and descriptive video tracks, as AI becomes more mature.

NVidia also released an “AI enabled” version of I-Ray, to use image prediction to increase the speed of interactive ray tracing renders. I am hopeful that similar technology could be used to effectively increase the resolution of video footage as well. Basically a computer sees a low res image of a car, and says “I know what that car should look like,” and fills in the rest of the visual data. The possibilities are pretty incredible, especially in regard to VFX.

On the VR front, NVidia announced a new SDK that allows live GPU accelerated image stitching for stereoscopic VR processing and streaming. It scales from HD to 5K output, splitting the workload across 1-4 GPUs. The stereoscopic version is doing much more than basic stitching: it processes for depth information, and uses that to filter the output to remove visual anomalies and improve the perception of depth. The output was much cleaner than any other live solution I have seen. I also got to try my first VR experience recorded with a light field camera. This gives the user a 360 stereo look around capability, but also the ability to move their head around to shift their perspective within a limited range (based on the size of the recording array). The project they were using to demo the technology didn’t highlight the amazing results until the very end of the piece, but it was the most impressive VR implementation I have had the opportunity to experience yet.
