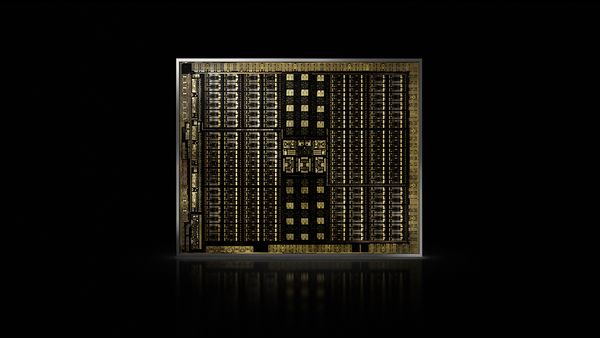

Over the last week or so, NVIDIA has announced a number of new graphics cards, all based on their new 8th generation “Turing” GPU architecture. This replaces the previous generation of “Pascal” based products that were first introduced over two years ago. “Turing” products bring a number of new features to the table, including Tensor cores, RT cores, and VirtualLink. Tensor cores were first introduced in the Volta series of server chips for low precision matrix calculations, which are used in pattern recognition and AI processing. RT cores are a brand new processing pathway optimized for tracing light rays, and VirtualLink is a USB-C based single cable connection for VR headsets, integrated as an output on the graphics cards.

On the professional front, three new top end Quadro cards were announced. The new generation is branded as Quadro RTX to highlight the new ray tracing acceleration, and the top card is now the 8000, with 4608 CUDA cores, 576 Tensor cores, and 48GB of frame buffer memory. The 6000 is the next step down, and has the same core counts but half the frame buffer memory, at 24GB. The Quadro RTX 5000 loses 1/3 of the GPU cores, leaving “only” 3072 CUDA cores and 384 Tensor cores, with 16GB of memory.

On the gaming side, the new cards are the GeForce RTX 2080 Ti, 2080, and 2070. They have a similar architecture to the new Quadro cards, but different core counts and much less memory. By my calculations, they will only be about 10% faster than the previous generation cards at the same levels in standard single precision processing. The benefits they bring to the table come in the form of increased image quality via new processing methods, including ray tracing and AI based Deep Learning Anti-Aliasing (DLAA). These should dramatically improve the quality of the rendered images without necessarily increasing (or decreasing) frame rates by much. Basically, they are passing off certain render tasks to dedicated processing hardware. Many of these tasks are important to improving the VR experience, and all of these cards are designed to meet the needs of VR users. Most of these new cards (all but the 2070) also support NVLink as a replacement for SLI, allowing multiple GPUs to share their memory buffers.

It is hard to keep track of all of the new card options, so here is a unified table of the info available so far:

| Card | CUDA/Tensor Cores | GPU Memory | MSRP |
|---|---|---|---|
| GeForce RTX 2070 | 2304/288 | 8GB GDDR6 | $500 |
| GeForce RTX 2080 | 2944/368 | 8GB GDDR6 | $700 |
| Quadro RTX 5000 | 3072/384 | 16GB GDDR6 | $2300 |
| GeForce RTX 2080 Ti | 4352/544 | 11GB GDDR6 | $900 |
| Quadro RTX 6000 | 4608/576 | 24GB GDDR6 | $6300 |
| Quadro RTX 8000 | 4608/576 | 48GB GDDR6 | $10000 |
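For anyone who wants to compare the cards a little further, here is a minimal Python sketch that works only from the figures in the table above. The metrics it derives (CUDA cores per Tensor core, dollars per CUDA core, dollars per GB of memory) and the names `cards` and `summarize` are my own illustrative choices, not anything NVIDIA publishes, and price per core is only a rough yardstick since it ignores clock speeds and memory bandwidth.

```python
# Figures copied from the table above:
# (CUDA cores, Tensor cores, memory in GB, MSRP in USD)
cards = {
    "GeForce RTX 2070":    (2304, 288, 8, 500),
    "GeForce RTX 2080":    (2944, 368, 8, 700),
    "Quadro RTX 5000":     (3072, 384, 16, 2300),
    "GeForce RTX 2080 Ti": (4352, 544, 11, 900),
    "Quadro RTX 6000":     (4608, 576, 24, 6300),
    "Quadro RTX 8000":     (4608, 576, 48, 10000),
}


def summarize(cuda, tensor, mem_gb, msrp):
    """Derive a few simple ratios from one row of the table."""
    return {
        "cuda_per_tensor": cuda / tensor,   # CUDA cores per Tensor core
        "usd_per_cuda_core": msrp / cuda,   # rough price per CUDA core
        "usd_per_gb": msrp / mem_gb,        # rough price per GB of memory
    }


summary = {name: summarize(*spec) for name, spec in cards.items()}

for name, s in summary.items():
    print(
        f"{name:<20} "
        f"CUDA:Tensor = {s['cuda_per_tensor']:.0f}:1  "
        f"${s['usd_per_cuda_core']:.2f}/core  "
        f"${s['usd_per_gb']:.0f}/GB"
    )
```

One thing this makes easy to see is that every card in the table has the same 8:1 ratio of CUDA cores to Tensor cores, so the product tiers differ in how much silicon is enabled and how much memory is attached rather than in the mix of core types.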

While I am looking forward to seeing this technology improve mobile performance as well, I don’t think it will make as much of an impact in that space as the previous two generations did. Maxwell was very focused on energy efficiency, and Pascal was able to dramatically increase clock speeds, but the step up to Turing is nearly all about the new dedicated processing areas for various tasks, which will not necessarily improve efficiency for standard processing tasks. So until the software has caught up and can utilize those new dedicated processing features, we won’t see much improvement in efficiency. (Basically, I am no longer going to wait for Turing to hit notebooks before replacing my GeForce 870M based notebook, which is reaching the end of its useful life.)

But there are already steps being taken in that direction that will apply to video editing. This article outlines how the new Turing architecture is being utilized for decoding and demosaicing Red R3D files for real time 8K playback. I can’t put a Red RocketX in a notebook, but soon I will be able to put a Turing GPU in one, and this is where we will see it making a big difference. There will be many more potential ways to improve processing performance in the future.